The BullSequana AI Platform is a modular software stack, built on open-source components, for running production AI workloads: from LLM serving and RAG to fine-tuning and classical ML.

Unlike locked-in cloud AI services, it runs wherever you need it: on your existing GPU servers, on sovereign or private cloud infrastructure, or on purpose-built BullSequana hardware.

Built by the sole EU-based AI hardware manufacturer, the platform brings enterprise-grade AI capabilities to organisations that demand full control over their data, models, and infrastructure choices.

Deploy the BullSequana AI Platform on your current servers: NVIDIA, AMD, or mixed environments.

No hardware purchase required. Add enterprise-grade model management, serving, and MLOps to infrastructure you already own.

White-label a production-ready AI platform for your sovereign cloud offering.

Open-source foundations, Infrastructure as Code (IaC) deployment scripts, and a multi-tenant architecture let you stand up AI services for your customers without building from scratch.

Run multiple AI workloads (GenAI, classical ML, computer vision, document AI) on a single unified platform.

Two-tier architecture (Core + Pro) scales from inference-only deployments to full MLOps lifecycle management.

Complement your Databricks Lakehouse with a sovereign AI runtime. The platform shares compatible architecture and components, minimising migration friction while keeping sensitive inference workloads entirely on-premise or in your sovereign cloud.

Everything you need to serve and manage GenAI models in production.

LLM serving engine

Run model inference at scale and expose models through OpenAI-compatible APIs. Tuned for throughput and latency by dedicated benchmarking teams.
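
Because the serving engine exposes OpenAI-compatible APIs, any client that speaks the OpenAI chat-completions wire format should be able to talk to it. The sketch below builds such a request with the Python standard library only. The base URL, model name, and API key are placeholders, and it assumes the deployment serves the usual `/v1/chat/completions` route with bearer-token authentication; check your own deployment for the actual endpoint and auth scheme.

```python
import json
import urllib.request

# Hypothetical base URL: replace with your own deployment's endpoint.
BASE_URL = "https://llm.example.internal/v1"


def build_chat_request(model: str, prompt: str, api_key: str,
                       max_tokens: int = 256, temperature: float = 0.2) -> urllib.request.Request:
    """Build (but do not send) a POST to an OpenAI-style /chat/completions route."""
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a concise assistant."},
            {"role": "user", "content": prompt},
        ],
        "max_tokens": max_tokens,
        "temperature": temperature,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # assumed bearer-token auth
        },
        method="POST",
    )


def extract_answer(response_body: bytes) -> str:
    """Return the first choice's message text from an OpenAI-style response body."""
    data = json.loads(response_body)
    return data["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # "mistral-7b-instruct" is a hypothetical model name; use one registered on your platform.
    req = build_chat_request("mistral-7b-instruct", "Summarise GDPR in one sentence.", "YOUR_KEY")
    # Sending requires a reachable endpoint:
    # with urllib.request.urlopen(req, timeout=30) as resp:
    #     print(extract_answer(resp.read()))
    print(req.get_method(), req.full_url)
```

Because the request format is the OpenAI one, existing OpenAI SDKs can usually be pointed at the same base URL instead of hand-building requests like this.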

Model management

Register, version, deploy, and monitor any GenAI model. Support for open-source models (Mistral, Llama, Qwen, DeepSeek) and commercial models from any registry.

RAG enablement

Complete, templated embedding pipelines and vector database support (Milvus) for retrieval-augmented generation (RAG). Connect your enterprise knowledge base in hours.

Available with limited self-service support or with a full enterprise-grade SLA.
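
At query time, a RAG pipeline embeds the question, retrieves the nearest document chunks, and places them in the prompt. The following is a minimal, self-contained sketch of that retrieval step using brute-force cosine similarity over a tiny in-memory index. In the platform this search would be handled by Milvus, whose client API is not shown here; the 3-dimensional vectors and passages are hypothetical stand-ins for real embedding-model output.

```python
import math

# Toy 3-dimensional "embeddings" (hypothetical); real vectors come from an embedding model
# and would be stored in a vector database such as Milvus rather than a Python list.
INDEX = [
    ("Reset the pump controller by holding the red button for 5 s.", [0.9, 0.1, 0.0]),
    ("Invoices are processed within 30 days.", [0.0, 0.2, 0.9]),
    ("Pump error E42 indicates a blocked intake filter.", [0.8, 0.3, 0.1]),
]


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float], index: list, k: int = 2) -> list[str]:
    """Brute-force nearest neighbours: score every chunk, keep the k best."""
    ranked = sorted(index, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_prompt(question: str, passages: list[str]) -> str:
    """Ground the LLM by placing the retrieved passages ahead of the question."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {question}"


if __name__ == "__main__":
    query_vec = [0.85, 0.2, 0.05]  # pretend embedding of the question below
    print(build_prompt("How do I fix pump error E42?", top_k(query_vec, INDEX)))
```

Swapping the in-memory list for a vector database changes how `top_k` is computed (approximate nearest-neighbour indexes instead of a full sort), not the overall shape of the pipeline.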

For organisations running advanced AI use cases that go beyond inference.

Everything in Core, plus:

Data management

Create and curate training datasets. Manage metadata to enable natural-language queries over your data. Use efficient data-movement tooling for large-scale operations.

Fine-tuning & MLOps

Full AI lifecycle support: train, fine-tune, evaluate, and deploy. Specialise models on your domain data. Hybridise classical ML and GenAI in unified pipelines.

Includes full L3 vendor support, a guaranteed SLA with a 24/7 option, software releases and updates, and on-call troubleshooting.
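
One common way to hybridise classical ML and GenAI is to let a cheap statistical model triage records and send only the suspicious ones to an LLM for a written review. The sketch below illustrates that pattern with a z-score outlier test on claim amounts. The claims, the 2.5 threshold, and the prompt wording are all hypothetical, and this is not the platform's pipeline API; the LLM call itself is left out.

```python
import statistics

# Hypothetical claims: ten amounts in EUR, one of them unusually large.
CLAIMS = [
    {"id": f"C{n:03d}", "amount": amount}
    for n, amount in enumerate([120, 130, 125, 118, 122, 127, 119, 124, 121, 900])
]


def flag_outliers(amounts: list[float], threshold: float = 2.5) -> list[int]:
    """Classical-ML step: indices whose absolute z-score exceeds `threshold`."""
    mean = statistics.mean(amounts)
    stdev = statistics.pstdev(amounts)  # population standard deviation
    if stdev == 0:
        return []  # all values identical: nothing stands out
    return [i for i, a in enumerate(amounts) if abs(a - mean) / stdev > threshold]


def review_prompts(claims: list[dict], flagged: list[int]) -> list[str]:
    """GenAI step: build one LLM prompt per flagged claim (sending it is omitted)."""
    return [
        f"Claim {claims[i]['id']} is for {claims[i]['amount']} EUR, outside the usual range. "
        "List the documents an auditor should request to verify it."
        for i in flagged
    ]


if __name__ == "__main__":
    flagged = flag_outliers([c["amount"] for c in CLAIMS])
    for prompt in review_prompts(CLAIMS, flagged):
        print(prompt)
```

The point of the split is cost: LLM calls scale with the number of flagged records rather than the full dataset, which matters when inputs run to billions of documents.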

Unlike locked-in cloud AI services, BullSequana AI Platform runs wherever you need it.

On BullSequana hardware (optimised)

Purpose-built AI servers from the BullSequana family (AI 220, AI 620, AI 640) with L40S, H100, or H200 GPUs. Factory-optimised for maximum performance. Available as a bundled appliance via GenAI in a Box.

On your existing infrastructure (BYOH)

Deploy on any NVIDIA GPU-equipped servers you already own. The platform is hardware-agnostic at the software level: bring your own H100, A100, or L40S GPUs from any OEM.

On sovereign / private cloud

Run on OpenShift, bare metal, or private cloud environments. Infrastructure as Code (Terraform + Ansible) scripts enable deployment in minutes. Ideal for sovereign hyperscalers building localised AI offerings.

Hybrid deployments

Combine on-premise inference with cloud-based training, or distribute workloads across multiple sites. The platform's modular architecture supports mixed deployment topologies.

The platform is built on open-source components and is fully model-agnostic, with no dependency on a single model provider, GPU vendor, or framework.

Built by the sole EU-based AI hardware manufacturer. Keep all data, models, and inference in-house or within your sovereign cloud. Full GDPR, HIPAA, and data residency compliance.

Start with Core (inference + model management) and upgrade to Pro (fine-tuning + MLOps) as your AI maturity grows. Pay only for what you need.

Infrastructure as Code scripts deploy the platform in minutes. No months-long integration projects. Certified delivery teams available for complex environments.

Runs on BullSequana servers or your existing GPU infrastructure. No hardware lock-in. Bring your own servers, bring your own GPUs.

Not just GenAI. Combine LLM serving with classical ML, computer vision, document AI, and anomaly detection in unified pipelines. One platform for all AI workloads.

Full L3 support, guaranteed SLAs, 24/7 on-call option, and continuous software updates. Built for production, not prototypes.

From hardware to virtualization platform, ensuring sovereignty, compliance, and full auditability through open-source engineering.

Robust and future-proof virtualization solution delivering 60–80% lower TCO compared to traditional options.

Flexible configurations with BullSequana SH or BullSequana SA to meet diverse workload needs.

A lightweight hypervisor and optimised design maximise the VM-to-core ratio.

Full supply chain transparency, advanced hardware protection, and trusted execution technologies.

Reduced power consumption per workload with eco-conscious infrastructure design and smart VM scheduling.

Modular, composable hardware with API-driven orchestration for seamless scalability.

A pre-validated reference architecture and a single point of contact (SPOC) for hardware-software integration and support.

Core, limited/self-service support:

From 25k EUR/year (on BullSequana HW)

From 40k EUR/year (on third-party HW)

Core, enterprise support:

From 75k EUR/year (on BullSequana HW)

From 120k EUR/year (on third-party HW)

Pro, enterprise support:

From 125k EUR/year (on BullSequana HW)

From 225k EUR/year (on third-party HW)
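
To make the hardware-dependent pricing concrete, the snippet below computes how much more each listed minimum subscription costs on third-party hardware than on BullSequana hardware. It uses only the "from" figures above; the tier labels (Core self-service, Core enterprise, Pro enterprise) are our inference from the page layout, and actual quotes may differ.

```python
# Minimum ("from") annual subscription fees in k EUR/year, copied from the price list.
# Tier labels are an assumption inferred from the page layout.
PRICES = {
    "Core, limited/self-service": {"bullsequana_hw": 25, "third_party_hw": 40},
    "Core, enterprise support": {"bullsequana_hw": 75, "third_party_hw": 120},
    "Pro, enterprise support": {"bullsequana_hw": 125, "third_party_hw": 225},
}


def third_party_premium(prices: dict) -> dict:
    """Return {tier: (extra k EUR/year, extra % over the BullSequana HW price)}."""
    result = {}
    for tier, fees in prices.items():
        extra = fees["third_party_hw"] - fees["bullsequana_hw"]
        result[tier] = (extra, round(100 * extra / fees["bullsequana_hw"]))
    return result


if __name__ == "__main__":
    for tier, (extra, pct) in third_party_premium(PRICES).items():
        print(f"{tier}: +{extra}k EUR/year (+{pct}%)")
```

On these figures, the third-party minimum is 60% higher for both Core options (+15k and +45k EUR/year) and 80% higher for the top tier (+100k EUR/year).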

| Size | Hardware | Price |
|---|---|---|
| Small | AI 220, 4× L40S | From 50k EUR |
| Medium | AI 220/620, 4× H100 NVL | From 145k EUR |
| Large | AI 640, 8× H200 | From 310k EUR |

Custom configurations, consulting packages, and volume terms available on request.

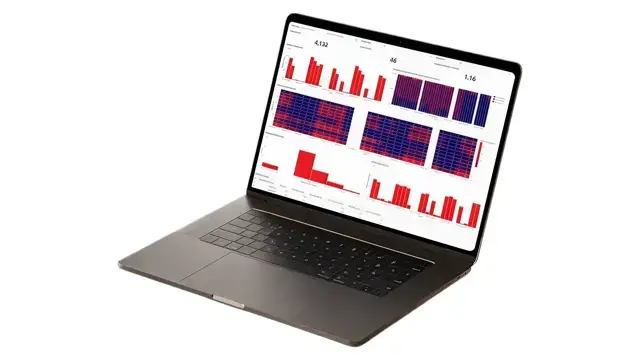

Our portfolio of 300+ enterprise AI projects since 2016 spans healthcare fraud detection (85% accuracy across billions of documents), service desk automation (20% faster query processing), and national AI strategy implementations for governments and global brands.

Communication Inspector

Audit 100% of client calls, chats, and emails with LLM-driven compliance analysis.

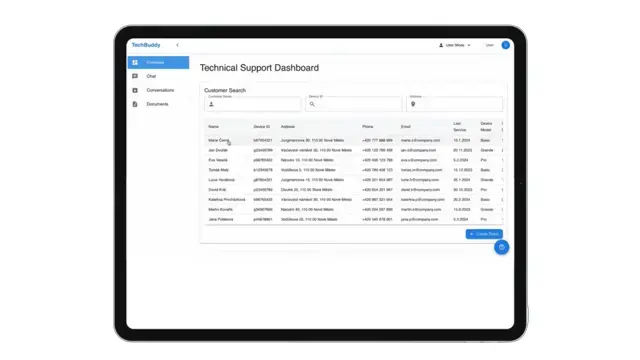

TechBuddy

AI-powered technical assistant for manufacturing and service teams, using RAG on manuals, tickets, and SOPs.

ClaimSense

AI-native claims processing platform achieving 10× faster resolution for insurance teams.

Public healthcare agency

85% accuracy in fraud detection across billions of documents using hybrid classical-ML and GenAI pipelines on the platform.

Enterprise service desk

20% reduction in query processing time, helping users find relevant solutions and procedures faster with AI-assisted search.

Global enterprise clients

Trusted by organisations including L'Oréal, AXA, and government agencies implementing national AI strategies. Delivered by a team of 250+ certified enterprise AI experts.

Whether you're building an AI practice on existing infrastructure, launching a sovereign cloud AI offering, or looking for a Databricks-compatible on-premise runtime, BullSequana AI Platform gives you enterprise-grade capabilities without enterprise-grade lock-in. Open-source foundations. Modular architecture. Your infrastructure, your models, your terms.

GenAI in a Box is a complete turnkey appliance: hardware, platform software, and services shipped as one package. The BullSequana AI Platform is the software component, available independently for organisations that already have GPU infrastructure or want to deploy on sovereign cloud environments.

Yes. The platform is hardware-agnostic at the software level. It runs on any NVIDIA GPU-equipped servers, whether from BullSequana, Dell, HPE, Supermicro, or other OEMs.

Yes. The platform shares compatible architecture and components with the Databricks ecosystem, minimising migration friction for organisations looking to complement their Lakehouse with sovereign AI inference and fine-tuning capabilities.

The platform is model-agnostic. It supports any model from any registry, including Mistral, Llama, Qwen, DeepSeek, and custom fine-tuned models. Both open-source and commercial models are supported.

Yes. The modular architecture and IaC deployment scripts are designed for multi-tenant environments. Contact our team for sovereign cloud partnership terms.

Yes. Platform subscriptions on BullSequana hardware carry a lower fee because the hardware purchase is part of the overall commercial relationship. On third-party hardware, the platform carries the full subscription cost. See the pricing section for details.