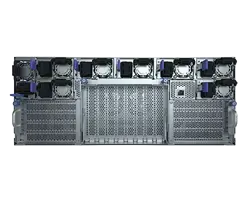

The BullSequana AI 642-H03 is an AI-optimised compute node designed to empower industries, national HPC centres, government agencies, academia, and start-ups to run accelerated, low-latency AI inference and precision fine-tuning for mid-sized language models (~100M–10B parameters) and multimodal AI workloads.

This turnkey solution provides flexibility and efficiency for both rapid brownfield rollouts and greenfield deployments.

It supports up to 48 AMD Instinct™ MI355X GPUs per rack, delivering 64 TB of global GPU bandwidth for high-speed communication and direct memory exchange.

It features hybrid cooling for improved energy efficiency and sustained performance, with up to 70% direct liquid cooling (DLC) and 30% air cooling via fans.

With its fully pre-integrated, pre-tested, and pre-configured design, the BullSequana AI 642-H03 server offers fast deployment and streamlined installation while reducing space and costs.

The GPUs support a wide range of precision formats for training and inference and offer 2.3 TB of HBM3e memory, with 288 GB onboard per GPU.
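As a rough illustration of how the quoted 288 GB per GPU relates to the ~100M–10B parameter models mentioned above, the sketch below estimates the memory needed for model weights alone. The function name `weights_gb` and the choice of FP16 and FP8 as example formats are assumptions made for illustration. Activations, KV cache, and optimiser state are not counted, so real requirements will be higher.

```python
def weights_gb(params: float, bytes_per_param: float) -> float:
    """Approximate memory for model weights only, in GB (10^9 bytes)."""
    return params * bytes_per_param / 1e9


GPU_MEMORY_GB = 288  # per-GPU memory figure quoted in the text

# Example precisions (assumed for illustration): bytes per parameter.
PRECISIONS = {"FP16": 2, "FP8": 1}

for params in (100e6, 1e9, 10e9):
    for name, nbytes in PRECISIONS.items():
        need = weights_gb(params, nbytes)
        share = need / GPU_MEMORY_GB
        print(f"{params:.0e} params @ {name}: {need:.1f} GB "
              f"({share:.1%} of one GPU)")
```

Under these assumptions, even a 10B-parameter model at 16-bit precision needs roughly 20 GB for its weights, which leaves most of a single GPU's memory for everything else.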

AI developers and system administrators can leverage AMD ROCm 7 and Bull’s HPC Suite for optimised model performance, unified management, and sustainable, cost-effective AI operations.

Bull is one of Europe’s leading supercomputer manufacturers

Our experienced team serves customers around the world

We deliver systems from design to deployment

Holistic solutions through R&D, delivery and partner technology

From hardware to virtualization platform, ensuring sovereignty, compliance, and full auditability through open-source engineering.

Robust and future-proof virtualization solution delivering 60–80% lower total cost of ownership (TCO) compared to traditional options.

Flexible configurations with BullSequana SH or BullSequana SA to meet diverse workload needs.

Lightweight hypervisor and optimized design for maximum VM-to-core ratio.

Full supply chain transparency, advanced hardware protection, and trusted execution technologies.

Reduced power consumption per workload with eco-conscious infrastructure design and smart VM scheduling.

Modular, composable hardware with API-driven orchestration for seamless scalability.

A pre-validated reference architecture and a single point of contact (SPOC) for hardware-software integration and support.